Pandas vs PySpark: When Big Data requires the switch

Data Processing Requirements and Constraints

Data scientists and engineers must evaluate the technical capabilities of specific software libraries to process structured datasets effectively. The comparison of Pandas and PySpark represents a critical decision point in the development timeline of a modern data project. Organizations must select the appropriate big data tools to ensure efficient data processing and system stability as datasets grow in volume, velocity, and variety.

In practice, the choice between these two libraries depends largely on the size of the data relative to the memory and compute resources available to the engineering team.

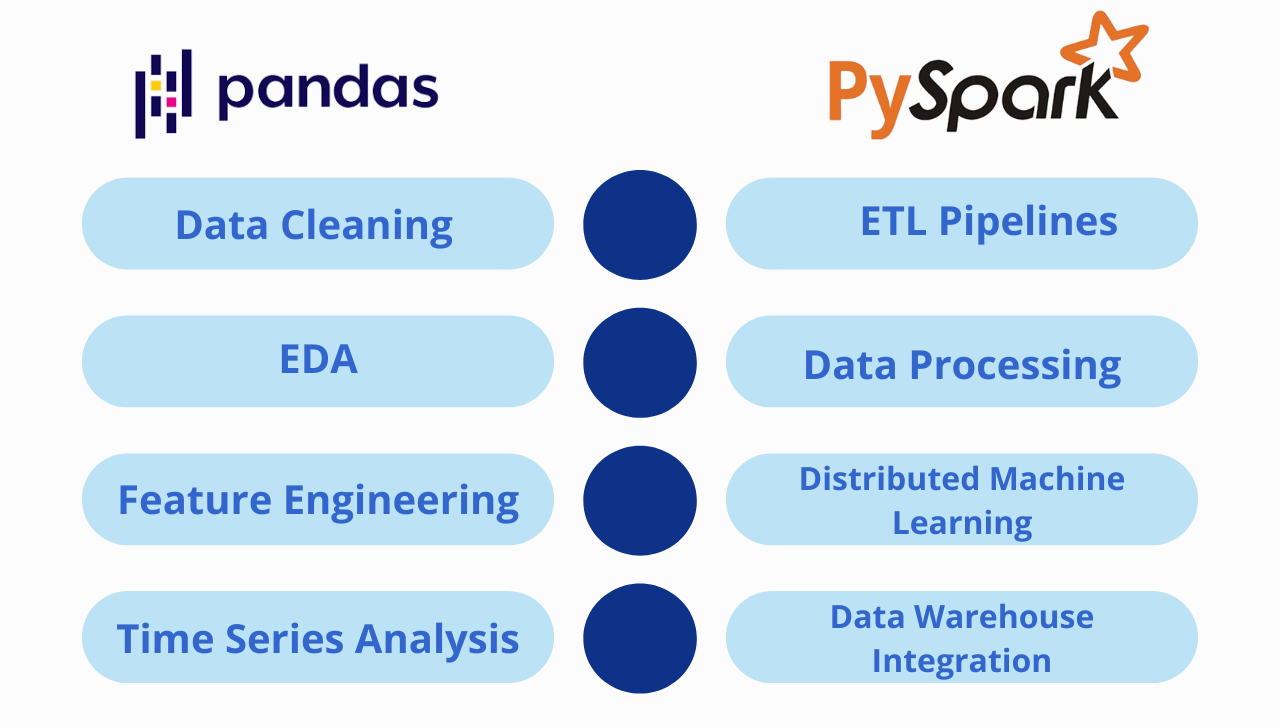

Pandas is an open-source data manipulation and analysis library built for the Python programming language, utilizing a memory-bound DataFrame object. It is used for:

1. Data Cleaning

2. Exploratory Data Analysis

3. Feature Engineering

4. Time Series Analysis

PySpark is the Python application programming interface for Apache Spark, engineered to distribute computational tasks across multiple interconnected computers. It is used for:

1. Big Data ETL Pipelines

2. Streaming Data Processing

3. Distributed Machine Learning

4. Data Warehouse Integration

The Architecture and Execution Model of Pandas

The Pandas library operates on a single computing node. When a user loads a dataset into a Pandas DataFrame, the library by default reads the entire file from storage and holds it in the Random Access Memory (RAM) of that machine. This in-memory model makes reads and writes fast because the central processing unit works on the data directly in local memory rather than fetching it from disk or over a network. Pandas uses an eager execution model: every data transformation is executed immediately when it is called, and its result is available to the next statement.
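A minimal sketch of this behavior, assuming a small inline CSV in place of a real file (the column names and values are made up for illustration):

```python
import io

import pandas as pd

# Hypothetical sample data standing in for a file on disk.
csv_text = "order_id,region,amount\n1,north,120.5\n2,south,80.0\n3,north,42.25\n"

# read_csv parses the whole input and materializes it in RAM right away.
orders = pd.read_csv(io.StringIO(csv_text))

# Eager execution: each statement runs immediately and returns a concrete result.
north = orders[orders["region"] == "north"]
north_total = north["amount"].sum()

# memory_usage(deep=True) reports the bytes each column occupies in memory.
bytes_in_ram = int(orders.memory_usage(deep=True).sum())

print(len(north), north_total, bytes_in_ram)
```

Because nothing is deferred, `north` and `north_total` can be inspected as soon as their lines finish, which is what makes interactive exploration comfortable.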

This architectural design imposes a practical limit on the amount of data the library can process at once. The dataset, together with any intermediate copies created during transformations, must fit within the physical memory installed on the computer, minus the memory required by the operating system and background applications. Furthermore, because most Pandas operations run on a single thread by default, the library does not natively distribute computational tasks across multiple processing cores. Computational speed therefore depends largely on the single-core performance of the local processor.
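The memory constraint can be turned into a rough back-of-the-envelope check. The sketch below is only a heuristic: the function names are hypothetical, and the `working_copy_factor` of 3 is an assumed rule of thumb for intermediate copies, not a figure from Pandas itself.

```python
GIB = 1024 ** 3


def usable_memory_bytes(total_ram_bytes: int, reserved_bytes: int) -> int:
    """RAM left over after the operating system and background applications."""
    return max(total_ram_bytes - reserved_bytes, 0)


def fits_on_single_node(
    dataset_bytes: int,
    total_ram_bytes: int,
    reserved_bytes: int,
    working_copy_factor: float = 3.0,  # assumption: headroom for intermediate copies
) -> bool:
    """Return True if the dataset plus working copies fits in usable RAM."""
    needed = dataset_bytes * working_copy_factor
    return needed <= usable_memory_bytes(total_ram_bytes, reserved_bytes)


# Hypothetical machine: 16 GiB of RAM, 4 GiB reserved for the OS and other apps.
small_ok = fits_on_single_node(2 * GIB, 16 * GIB, 4 * GIB)
large_ok = fits_on_single_node(8 * GIB, 16 * GIB, 4 * GIB)
print(small_ok, large_ok)
```

Under these assumed numbers, a 2 GiB dataset passes the check while an 8 GiB dataset does not, even though 8 GiB is nominally smaller than the installed RAM.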

The Architecture and Execution Model of PySpark

PySpark fundamentally differs from single-node libraries by utilizing a distributed computing architecture. It operates by coordinating a cluster of independent computers: a driver (master) node that plans and coordinates the work, and multiple worker nodes that execute it. When data is ingested into the PySpark environment, the framework partitions the data into smaller, logical segments. It then distributes these segments across the memory and storage drives of the worker nodes in the cluster. Users interact with this distributed data using Spark DataFrames, which are designed to manage partitioned data across the machines of a cluster rather than on a single machine.
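The idea of partitioning can be illustrated without a cluster. The following is a toy model in plain Python, not the Spark API: `partition_rows` is a hypothetical helper that assigns rows to partitions by hashing a key, each partition computes a partial result as a worker would, and the partial results are then combined as the driver would.

```python
import zlib
from collections import defaultdict


def partition_rows(rows, key, num_partitions):
    """Assign each row to a partition based on a stable hash of its key."""
    partitions = defaultdict(list)
    for row in rows:
        # crc32 is stable across runs, unlike Python's built-in hash() for strings.
        idx = zlib.crc32(str(row[key]).encode("utf-8")) % num_partitions
        partitions[idx].append(row)
    return dict(partitions)


# Hypothetical sample rows.
rows = [
    {"region": "north", "amount": 120.5},
    {"region": "south", "amount": 80.0},
    {"region": "east", "amount": 15.0},
    {"region": "north", "amount": 42.25},
]

partitions = partition_rows(rows, key="region", num_partitions=3)

# Each "worker" sums only the rows in its own partition.
partial_sums = {idx: sum(r["amount"] for r in part) for idx, part in partitions.items()}

# The "driver" combines the partial results into the final answer.
total = sum(partial_sums.values())
print(total)
```

Rows that share a key always land in the same partition, which is why per-key aggregations can run on each worker independently before a final combine step.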

PySpark employs a lazy evaluation execution model. When a user writes data transformation commands, the software records these commands and constructs a Directed Acyclic Graph, a mathematical representation of the logical execution plan. The actual computation only occurs when an action command is explicitly triggered by the user. At that exact moment, the framework analyzes the graph, optimizes the execution plan, and distributes the physical processing tasks to the worker nodes.
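Lazy evaluation is easier to see in a small model. The class below is a toy stand-in written in plain Python, not the Spark API: `LazyPlan` and its methods are hypothetical names, the recorded plan is a simple linear chain rather than a general graph, and no optimization step is performed. It only shows that transformations are recorded and nothing runs until an action is called.

```python
class LazyPlan:
    """Toy lazily evaluated dataset: records steps, runs them only on collect()."""

    def __init__(self, source, steps=None):
        self._source = list(source)
        self._steps = list(steps or [])

    def map(self, fn):
        # Transformation: return a new plan with one more recorded step.
        return LazyPlan(self._source, self._steps + [("map", fn)])

    def filter(self, predicate):
        # Transformation: also only recorded, never executed here.
        return LazyPlan(self._source, self._steps + [("filter", predicate)])

    def collect(self):
        # Action: execute every recorded step against the source data.
        results = []
        for item in self._source:
            keep = True
            for kind, fn in self._steps:
                if kind == "map":
                    item = fn(item)
                elif not fn(item):
                    keep = False
                    break
            if keep:
                results.append(item)
        return results


calls = []


def double(x):
    calls.append(x)  # track when the function is actually invoked
    return x * 2


plan = LazyPlan(range(5)).map(double).filter(lambda x: x > 4)
calls_before_action = len(calls)  # no work has happened yet

result = plan.collect()  # the action triggers execution
print(calls_before_action, result, len(calls))
```

In PySpark itself, methods such as `filter` and `select` play the role of transformations, while methods such as `count` and `collect` are actions that trigger execution.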

Identifying the Transition Point: Aligning Tools with Goals

The selection between Pandas and PySpark starts as a technical decision based on data volume, memory capacity, and processing requirements, but those factors are only part of the picture.

Identifying the optimal point to transition between these libraries requires a clear understanding of the project's primary objectives and the distinct roles of the professionals executing the tasks. The decision is fundamentally driven by the end-goal of the data operation, rather than solely by hardware limitations. The transition from Pandas to PySpark represents a shift from localized analysis to scalable engineering.

Pandas is the primary tool utilized by data analysts for the preliminary stages of data processing. Its immediate execution model is explicitly designed for iterative data cleaning, exploratory data analysis, and feature engineering on manageable datasets. Analysts rely on Pandas to quickly identify data anomalies, handle missing values, and format data structures before proceeding to advanced statistical modeling or visualization tasks. The library provides a rapid, interactive environment essential for understanding the underlying patterns within the data.

Conversely, PySpark is the foundational technology utilized by data engineers for constructing resilient, large-scale data pipelines. The primary goal of utilizing PySpark is big data engineering, which involves automating the extraction, transformation, and loading of massive datasets across complex network architectures. Engineers deploy PySpark to process streaming data, execute batch processing jobs on historical data held in data warehouses, and prepare unified datasets for enterprise-level machine learning models. The transition to PySpark becomes necessary when the project goal shifts from understanding a static dataset to operationalizing a continuous flow of large-scale data in a production environment.
